Design Thinking for Software Quality Professionals

While the foundations of Design Thinking have been around for six decades, it’s a much more recent phenomenon in the software development space.

It is an even more recent arrival in our own industry, which for consistency this article will call Software Quality Engineering (SQE). Traditionally, Quality Engineers saw their value to organizations as helping ensure that software was delivered right. Quality (and success, for that matter) was measured by passing tests, minimal defects, and solid requirement coverage. To be sure, these are lofty goals and positive outcomes on any development project. But every discipline worthy of its staying power needs to expand, evolve, and improve, and SQE is no different.

A lot has been said and written about software testing taking on a more technical focus, using complex frameworks and tools to increase both the number of test cases and the speed at which they run. And rightly so. The value that good automation brings to the quality of software is indisputable. But not every tester wants to live in code, and I would argue that not every tester should. At the end of the day, the highest quality software you will ever use is not the website that was built right per the requirements, or the mobile app with no glitches. On the contrary, the highest quality software you could ever use is the software that intuitively meets your needs. Easy to say, hard to do? Sure. But this is where Design Thinking can help.

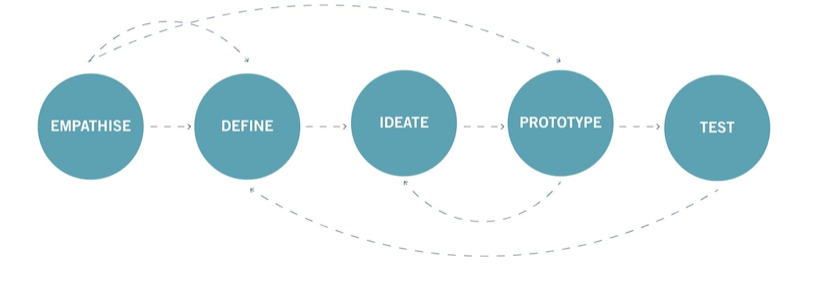

Just as test automation continues to evolve and improve, manual testing is also evolving and expanding its scope to include new disciplines like persona-based testing and user-centric reporting. Design Thinking is a bit unique in that it doesn’t prescribe a formulaic structure or process; instead, it is a hybrid blend of ideology and frame of mind that aims to solve complex (and wicked!) problems in a highly user-centric way. Design Thinking is organized into five non-linear areas, which will likely be familiar to anyone who has researched the subject. SQE professionals will naturally feel a stronger connection to some of these areas than to others. But it’s important to understand the entire model in context, both to be most effective in our part of the process and to seek out new areas of influence.

The Design Thinking Mindsets:

1. Empathize

While persona-based testing won’t fit in every context, it can be a very helpful and worthwhile exercise that is largely under-utilized by software quality teams. SQE personas help testers get inside the heads of end users and come up with realistic, priority-driven test scenarios and flows from the end user’s point of view.

Side note: There are some tricks and techniques (and pitfalls to look out for!) to building effective personas for testing. Be on the lookout for future blog posts covering this topic in more depth, or reach out to tapQA to chat more about it!

2. Define

Building personas to build empathy with our users is great, but at some point we need to step back into our own lives and make sense of our findings. Start by asking questions like:

- What barriers are our users up against?

- What’s most important?

- What should we focus on first?

- What patterns are we observing?

The answers to these questions can be used to build opportunity statements! An opportunity statement is a consistently formatted description of what the product team is enabling a customer to do, including a real customer experience outcome.

The recommended opportunity statement structure goes like this:

As a [user role & situation] I want to [do something important/motivation] so I can [expected outcome/aspirational goal].

Example Opportunity Statement:

As a tester of a healthcare payor claims system who tests health rules, I want an on-demand way to receive realistic inbound claims in EDI format so I can fulfill the testing requirements of this project.
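Because the template always has the same three slots, it can help to capture statements in a fixed shape so a team can review them consistently. The sketch below is a hypothetical illustration, not a tapQA tool: the `OpportunityStatement` class and its `render` and `how_might_we` methods are names invented here. It fills the template, refuses a statement with an empty slot (the template calls for a real outcome), and reframes the same statement as a "How might we" question for the Ideate stage.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class OpportunityStatement:
    """Hypothetical container for the three slots of the opportunity statement template."""

    user: str     # user role & situation
    want: str     # something important / motivation
    outcome: str  # expected outcome / aspirational goal

    def __post_init__(self) -> None:
        # The template requires all three parts, including a real outcome,
        # so reject any slot that is empty or only whitespace.
        for slot in ("user", "want", "outcome"):
            if not getattr(self, slot).strip():
                raise ValueError(f"opportunity statement is missing '{slot}'")

    def render(self) -> str:
        # Naive template fill; assumes the role reads naturally after "As a".
        return f"As a {self.user}, I want to {self.want} so I can {self.outcome}."

    def how_might_we(self) -> str:
        # Reframe the same statement as a "How might we" prompt for ideation.
        return f"How might we help a {self.user} {self.want}?"


statement = OpportunityStatement(
    user="tester of a healthcare payor claims system who tests health rules",
    want="receive realistic inbound claims in EDI format on demand",
    outcome="fulfill the testing requirements of this project",
)
print(statement.render())
print(statement.how_might_we())
```

Keeping the slots separate, rather than storing one free-text sentence, is a design choice: it makes a missing outcome easy to spot during review.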

Keep in mind that opportunity statements are not, and should not be, owned by the quality assurance team alone. Remember that cross-departmental collaboration is a core tenet of Design Thinking, and organizations are better off the earlier they involve quality professionals.

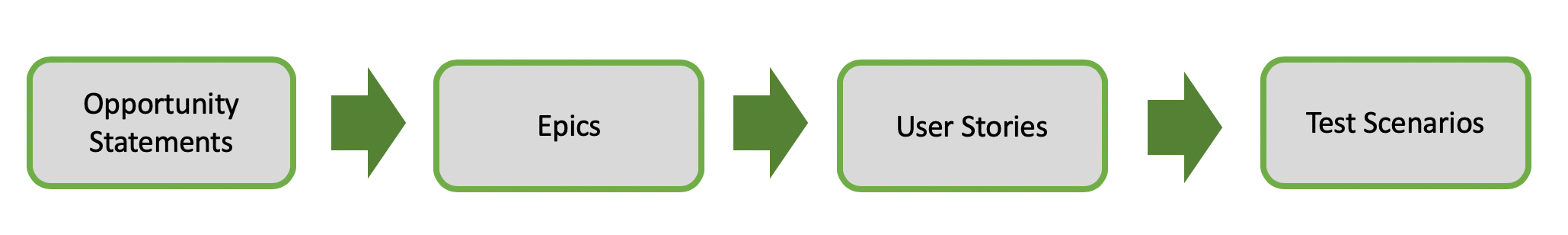

The graphic above represents where opportunity statements could fit in the hierarchy of a project cycle.

3. Ideate

Now that we know the problem to solve and who we’re solving for, it’s time to start thinking about how we’re going to solve it. There are many ideation (brainstorming) techniques, and some will be more effective than others depending on individual style. But one that seems to be a natural fit for folks in software quality is the “How might we” method. Thinking in terms of “How might we” breaks the opportunity statement into actionable segments and suggests that a solution is possible. It helps guide brainstorming but leaves the door wide open.

Using the “How Might We” method:

- Start by looking at the opportunity statements you created in the “Define” stage. Can you reframe them as “How might we” questions that point toward a solution?

- The goal is to find opportunities for design, so if your insights suggest several “How Might We” questions, that’s great.

- Now take a look at your "How Might We" question and ask yourself whether it allows for a variety of solutions. If it doesn’t, broaden it. Your "How Might We" should generate a number of possible answers and become a launchpad for your brainstorms.

- Finally, make sure that your "How Might We’s" aren’t too broad. It’s a tricky balance, but a good "How Might We" should give you a narrow enough frame to know where to start your brainstorm, while leaving enough breadth to give you room to explore wild ideas.

4. Prototype

A prototype is a scaled-down version of our “product” that we can bring to Test, and it can be physical or non-physical! Remember, we are applying Design Thinking methods that are also used in other industries, such as manufacturing, to our world of software quality. While terms like “prototyping” aren’t common in our world, the concepts of failing fast and minimum viable product can empower teams to take action and show them that failing is OK. In fact, it’s cheaper to fail earlier, and that includes QA teams trying out new tools, processes, standards, etc.

It’s important to go into prototyping with the expectation that our solutions may be accepted or rejected, improved, or changed into an unrecognizable version of their current state. Ultimately, it’s a cheap way to get something out there so you can start getting reactions and measuring effectiveness.

5. Test

First, throw out everything that comes to mind when you hear “test”. In Design Thinking, “test” does not mean looking for defects. Instead, it’s about putting your prototype in front of the users and seeing whether this product, process, or whatever it is works for them, tying all the way back to the Empathize mindset. Remember that Design Thinking is a non-linear process. You don’t need to follow these five “mindsets” in sequence, even though some sequences arise naturally. It is very possible that findings uncovered in the Test phase send us way back to a much earlier mindset like Empathize or Define. But Design Thinking favors action, and a team that collaborates effectively in a judgement-free zone can quickly bring an improved prototype back into test.

In fairness to Design Thinking, I realize that in the interest of brevity I have covered a very powerful subject very lightly. This post is not meant to be an exhaustive look at the place of software quality in Design Thinking. But my hope is that it has shown some of the connection and sparked some insights into how you can use Design Thinking concepts to evolve testing in your organization and make it more customer-centric.

Persona Testing & Chat Bot Success

In early 2020, tapQA started working with a company that builds and trains AI-driven chat bots to enhance the user experience on its customers' websites. The client reached out for testing help because it was not happy with the quality of answers the bots returned once released to production and open to the public. The bots displayed correctly on the page and gave factual responses to the most common and generic questions. But for many users looking for information in the context of what was most important to them, the bot wasn't equipped to help and ended up frustrating them.

tapQA's solution was to use the persona testing method. To accomplish this, tapQA identified 4-6 specific types of users that best represented the core demographics of the website's users and built empathy points with these users by assigning motivations, frustrations, technological savviness, etc. Then we assigned a tester to spend a couple of hours thinking of questions to ask the chat bot based on the context of each user profile. Once the bots were trained to answer the questions that came out of the persona exercises, they became far more effective at providing relevant answers to questions asked by users in the "real world."
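For teams that want to try the persona exercise described above, the sketch below shows one possible way to record a persona and turn it into a time-boxed testing charter. It is a hypothetical illustration, not the tooling used on this engagement. The `Persona` fields mirror the attributes named above (motivations, frustrations, technological savviness). The `session_charter` and `unanswered` helpers, the 120-minute default standing in for "a couple of hours", and the example persona are all assumptions made for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Persona:
    """Hypothetical test persona; the fields follow the attributes named in the case study."""

    name: str
    motivations: list[str]
    frustrations: list[str]
    tech_savviness: str  # e.g. "low", "medium", "high"
    questions: list[str] = field(default_factory=list)  # filled in by the tester


def session_charter(persona: Persona, minutes: int = 120) -> str:
    """Build a time-boxed charter that keeps the tester in the persona's context."""
    return (
        f"Spend {minutes} minutes as {persona.name} "
        f"(tech savviness: {persona.tech_savviness}). "
        f"Motivated by: {', '.join(persona.motivations)}. "
        f"Frustrated by: {', '.join(persona.frustrations)}. "
        "Write down every question this person would ask the chat bot."
    )


def unanswered(persona: Persona, bot_answers: dict[str, str]) -> list[str]:
    """Return the persona's questions that have no (or an empty) bot answer."""
    return [q for q in persona.questions if not bot_answers.get(q)]


# Hypothetical persona; a real exercise would define 4-6 of these.
sam = Persona(
    name="Sam, a busy parent shopping on a phone",
    motivations=["finish quickly"],
    frustrations=["jargon", "long pages"],
    tech_savviness="medium",
    questions=["Do you deliver on weekends?", "Can I return an item without a receipt?"],
)
print(session_charter(sam))
print(unanswered(sam, {"Do you deliver on weekends?": "Yes, on Saturdays."}))
```

The `unanswered` list is the useful by-product here: it gives the team a concrete backlog of persona-driven questions the bot still cannot handle.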

Could Design Thinking be right for your team?

Our team loves design thinking. Reach out and schedule a free consultation with one of our QA experts - we'd love to chat about how design thinking can help you.

Josh Brenneman

Josh Brenneman is the Delivery & Talent Director at tapQA. He has 10+ years of experience delivering value to organizations in the areas of strategic quality, delivery, release management, and testing.
