4 Test Automation Myths That Haunt Agile Teams

We live in a competitive, fast-paced world. New technologies arrive every day and customer needs change rapidly. Gone are the days when it took over a year to release software, followed by several months to release additional features. Today, companies release multiple times a day to meet growing customer demands. This puts additional pressure on project teams to meet tight release schedules and deadlines, so there is a need to automate as many processes as possible. This is especially true of software testing.

Over the years, the problems and conversations surrounding automated software testing have changed drastically. The concern used to be a lack of tools and frameworks for automated testing. Now it is when to do automated testing and how it fits into the overall context of the project. The reality is that there are many misconceptions surrounding automated testing, and addressing them is the first step towards building a stable test automation framework. Some of the most common misconceptions about automated tests are as follows.

Automation is a replacement for manual testing

Organizations often come to the wrong conclusion that automated testing is a replacement for manual testing. This is not true. Each has its own value, and the two need to be used to complement each other. While the automated tests repeat mundane tasks several times a day, testers can use that time to explore the application using risk-based, exploratory and other testing approaches, and to contribute to other areas of the development process. These approaches help to find new vulnerabilities in the application that would otherwise be hard to find with automated testing.

Also, manual testing brings a more human approach to testing by focusing on user empathy and using the application the way a customer would. This cannot be replaced by automation. So, it is important to understand where manual and automated testing each fit in the overall context of the project. Both, when performed diligently, can save considerable time, cost and effort.

Automation is a “Silver Bullet” that solves all problems

When teams decide to start doing automation, they are initially excited and have robust plans for building the automation framework. But as they write more automated tests, the realization slowly kicks in that these tests are not a “one size fits all” solution to every testing problem. Saying automated tests can solve all problems is like saying we can do 100% exhaustive testing. It is close to impossible. Teams need to have realistic expectations and goals for automation. They need to think about what problems they are trying to solve, what the cost versus value of automation is, and how they will measure automation effectiveness and progress against their end goal.

Automation should have 100% test coverage

Just because something can be automated does not mean it is the right scenario to automate. Many scenarios are not worth automating because of factors such as complexity, flakiness, system dependencies and slow response times. For example, say a web page has dynamically changing images, and you want to validate that the correct images are shown and that they render properly on different browsers and screen sizes. Automation is a possible option, but the time spent automating these scenarios outweighs the benefits. It is hard to verify that images render correctly on different screen sizes via automation, because the tests may have to rely on the x/y coordinates of elements, which introduces flakiness. It is also difficult to validate that the correct image is displayed without adding a certain level of complexity to the automation code. Instead, a group of testers could prioritize the top screen sizes and manually check that the correct images are displayed on each of them. So, the main goal should be to automate the right scenarios instead of focusing on test coverage.

Automation can be packed into one single test suite

One of the biggest problems with automated tests is maintaining them, and there is a significant cost associated with that. As the automated suite grows, it gets harder to maintain the tests and ensure they are working as expected. In addition, teams often choose to keep all their tests in one single automated suite and run it on every code check-in. This is fine if there are only a few tests in the suite, but with hundreds of tests it takes a long time to get feedback on the application. As a result, teams stop trusting these tests and start ignoring them.

One solution is to build a flexible automation framework and split test execution so that different groups of tests run at different phases of the development process. For example, we could have smoke tests that run on every code check-in and give quick feedback about the system. They should usually finish running within five to ten minutes. Then, have regression tests that run once or twice a day and help to identify whether any new functionality broke existing functionality. These typically take longer to run than smoke tests, as they may cover more end-to-end flows.
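As a rough illustration of that split, here is a minimal Python sketch that tags each test with the suites it belongs to, so a check-in job can run only the smoke tests while a scheduled job runs the full regression set. The `suite` decorator, the `SUITES` registry, the `run_suite` helper and the two sample tests are all hypothetical names invented for this example. They are not part of any particular framework.

```python
from collections import defaultdict
from typing import Callable, Dict, List

# Hypothetical registry: suite name -> test functions tagged with that name.
SUITES: Dict[str, List[Callable[[], None]]] = defaultdict(list)


def suite(*names: str):
    """Tag a test function as belonging to one or more named suites."""
    def decorator(func: Callable[[], None]) -> Callable[[], None]:
        for name in names:
            SUITES[name].append(func)
        return func
    return decorator


# A fast check that belongs in both suites (placeholder logic only).
@suite("smoke", "regression")
def test_login_page_loads() -> None:
    assert "login" in "/login"


# A slower end-to-end style check, kept out of the smoke suite.
@suite("regression")
def test_checkout_end_to_end() -> None:
    cart = [10, 20]
    assert sum(cart) == 30


def run_suite(name: str) -> Dict[str, bool]:
    """Run every test tagged with `name`; map test name to pass/fail."""
    results: Dict[str, bool] = {}
    for test in SUITES[name]:
        try:
            test()
            results[test.__name__] = True
        except AssertionError:
            results[test.__name__] = False
    return results


if __name__ == "__main__":
    # On every check-in: only the quick smoke tests.
    print(run_suite("smoke"))
    # Once or twice a day: the longer regression suite.
    print(run_suite("regression"))
```

In practice, most test frameworks offer an equivalent mechanism. With pytest, for instance, tests can be tagged with custom markers and a subset selected using `pytest -m smoke`.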

Automated testing has evolved drastically over time. The tools and frameworks are more advanced and more capable than what we saw a decade ago. Given this, it is good practice to address these common misconceptions before coming up with an automation test strategy and building tests.


by Raj Subrameyer, February 29th, 2020

tap|QA is excited to welcome Raj Subrameyer as our consulting Quality Evangelist. Raj is an internationally renowned speaker, writer and tech career coach, with expertise in many areas of software testing. More information about him can be found at www.rajsubra.com

Digital Transformation to Help You Achieve Agility

Today’s rapidly changing business environments – driven by innovation, digitization, and sheer increases in processing power – are direct byproducts of the digital transformation of the late-20th century. Now, organizations…

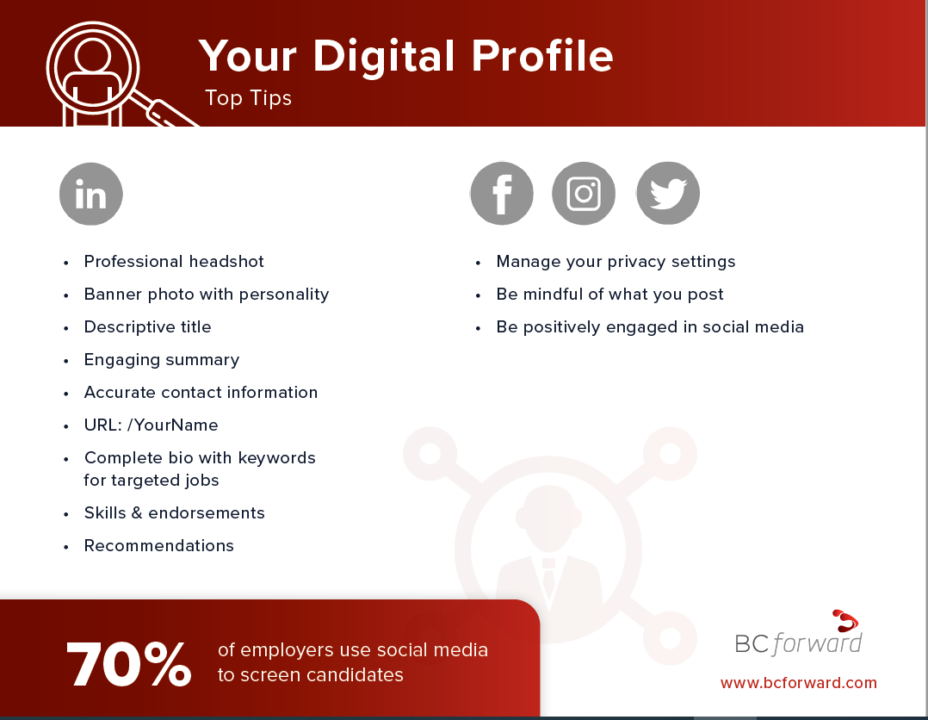

WEBINAR | tapQA Presents: Digital Profile

As we progress through almost an entire year of living in a pandemic the world around us has digitized almost, everything. But have you done this for yourself? In a…

Data-Driven Decision Making (DDDM): How You Are a Data Analyst and Don’t Even Know It

Every day we are faced with a multitude of decisions that are subconsciously being determined by data. Where is the cheapest place near me to buy gas? How busy is…